28 May 2025

SheCanCode x Joann DeLanoy - Eight top tips for improving your soft skills at work

Industry Press

Leadership

26 May 2025

Creative Bloq x Tom Dunn - 8 AI skills you need to land your dream design job, according to the pros

Industry Press

Leadership

Insights

22 May 2025

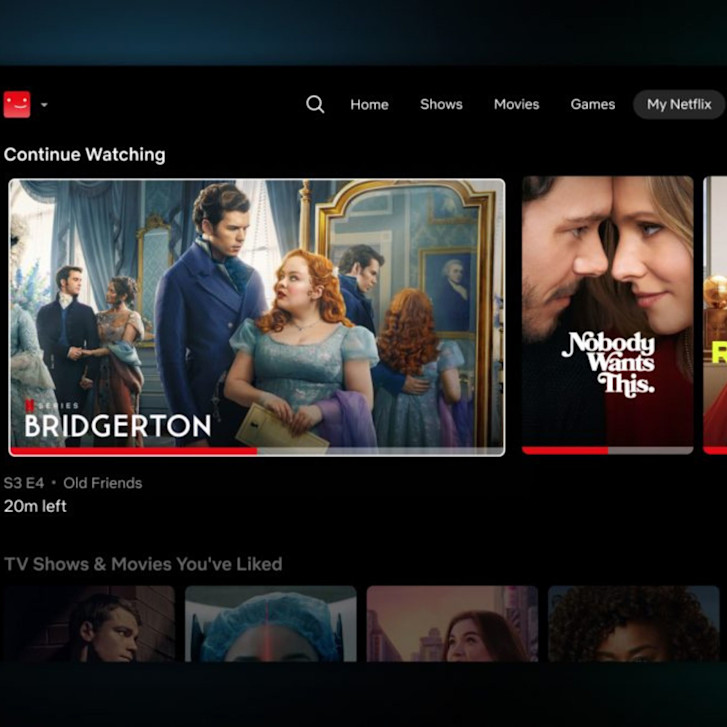

LBB Online x Tershari Johns - Soft Power Play: How Brands Can Authentically Enter the World of Cosy Games

Industry Press

Insights

22 May 2025

Big ideas, Gen Z vibes: Inside Toaster’s women-led creative culture

Industry Press

Leadership

20 May 2025

Toaster appoints Divyanshu Bhadoria as Chief Strategy Officer

Industry Press

Wins

Leadership

14 May 2025

Toaster INSEA's Content Studio Launches Axis My India’s ‘A’ App with an AI twist

Industry Press

Work

05 May 2025

LBB Online x Ira G - Copywriting Lessons from Poets, Playwrights and Novelists

Industry Press

Leadership

Insights